My AI Talks to Other AIs. And It Has a Morning Routine.

Two weeks ago I published a post about building three Claude Code features before Anthropic shipped them. Plan Mode, persistent memory, and a multi-agent orchestration system called Bridge, all of which were built weeks to months before the official versions launched.

That post ended with a line I'd written to myself that same morning:

Every week you don't publish, someone else is timestamping the same ideas you already had.

So here I am again with two more features, neither of them shipped by anyone yet (for the most part).

Feature 1: My AI That Talks to Other AIs

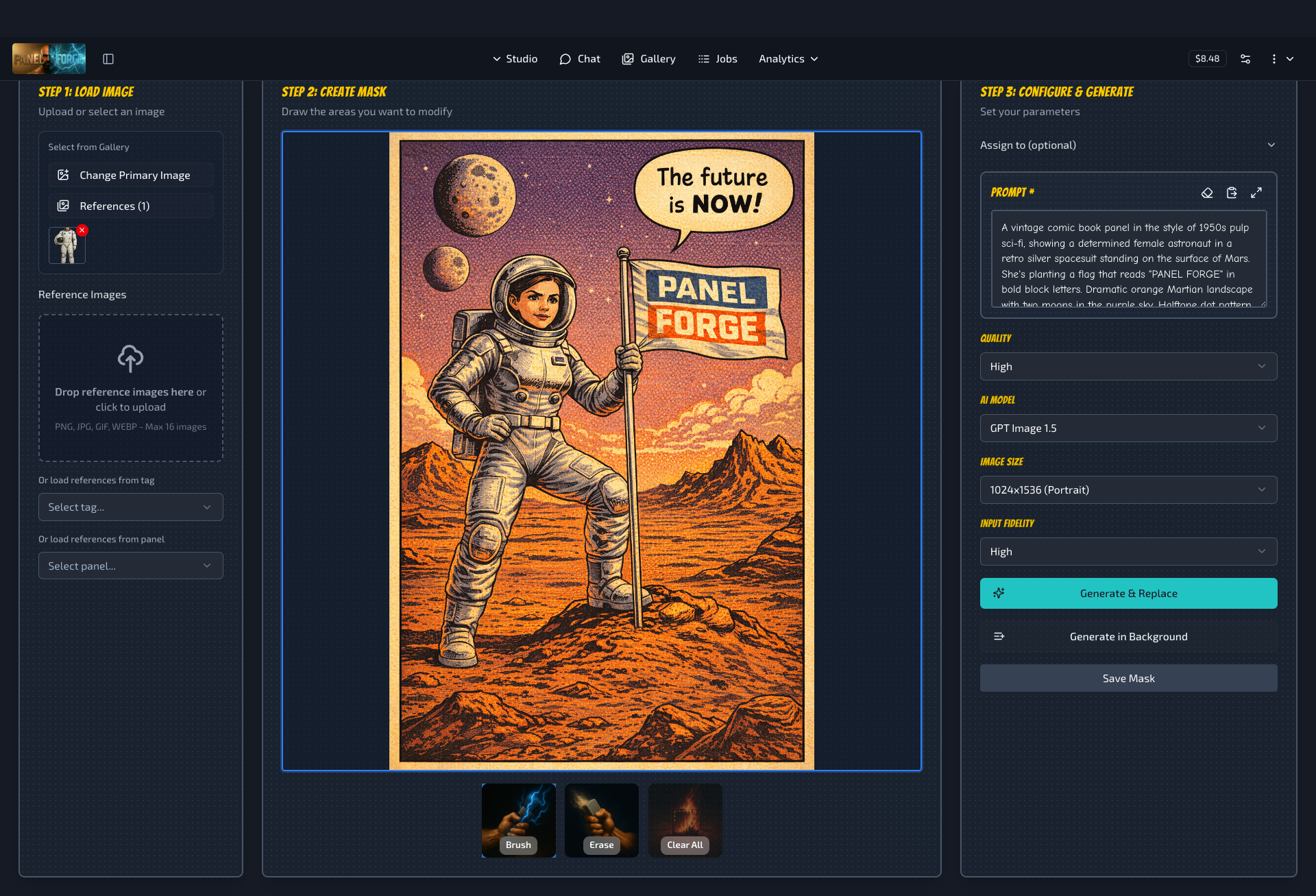

I built a platform called PanelForge. It started as a way to use ChatGPT's image generation feature, which was released nearly a year ago, and grew to support more. I added the ability to edit an existing image by brushing over part of it to erase it, then typing what should replace it.

I originally made it because I found it annoying to constantly upload and re-upload images in ChatGPT, e.g. reference images of a character I was working on, or a scene to put them in. I thought it would be nice to have a sort of website/app where I could upload reference images of characters, people, art styles etc. and then mix and match them. The existing apps could turn text into images and images into other images, but they just weren't providing the UI that I wanted, so I built one myself.

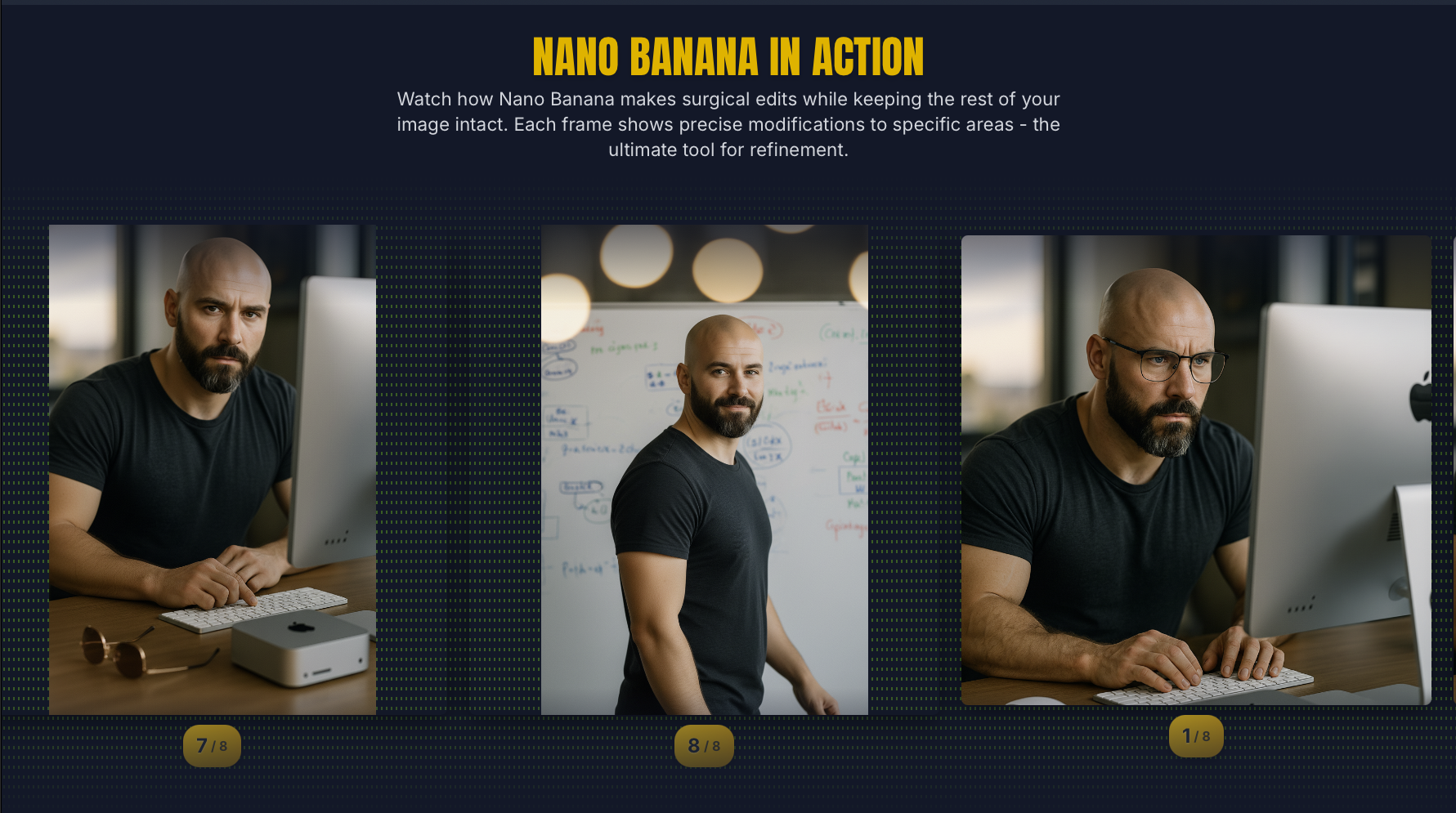

I then later added support for other image generation models, especially one by Google called Nano Banana, and later Nano Banana Pro, which in many ways overtook OpenAI in image generation capabilities, especially in changing just one or two smaller details but keeping the rest of the image the same.

See this showcase of images that show an example, all based on one original character I made.

That whole thing then grew to also cover the text models of the various providers, without needing a subscription to each of them. This is known as API billing: I basically just pay very small amounts per message instead of a monthly subscription.

PanelForge grew into a multi-provider AI hub where I could send messages to all the different AI providers in one place. I expanded it to use Gemini, ChatGPT, and Grok (just to try it out for completeness), and eventually it became something I didn't fully plan: a control plane for AI model orchestration. And I realised, hey, if I can call multiple models from the same place, why not have the AIs call each other? Why not let the AI decide which model to use for which task, and maintain conversations across them?

The way I did it was by making something called an MCP server. MCP stands for Model Context Protocol, and it's a standard way to give AI models access to external tools. My MCP server exposes PanelForge's entire conversation system as a set of tools: list_models, create_conversation, send_message, search_messages etc.
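To make the "conversation system as a set of tools" idea concrete, here is a minimal sketch in plain Python. It is not PanelForge's code and it does not use any MCP SDK. Only the four tool names come from the list above; the in-memory `ConversationStore`, the argument names, the echo reply, and the `example-text-model` name used in testing are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Conversation:
    id: int
    model: str
    messages: list[dict[str, str]] = field(default_factory=list)


class ConversationStore:
    """Hypothetical in-memory stand-in for a conversation system."""

    def __init__(self, models: list[str]) -> None:
        self.models = models
        self.conversations: dict[int, Conversation] = {}
        self._next_id = 1

    def list_models(self) -> list[str]:
        return list(self.models)

    def create_conversation(self, model: str) -> int:
        if model not in self.models:
            raise ValueError(f"unknown model: {model}")
        conv = Conversation(id=self._next_id, model=model)
        self.conversations[conv.id] = conv
        self._next_id += 1
        return conv.id

    def send_message(self, conversation_id: int, text: str) -> str:
        conv = self.conversations[conversation_id]
        conv.messages.append({"role": "user", "text": text})
        # A real server would call the provider's API here; this sketch echoes.
        reply = f"[{conv.model}] reply to: {text}"
        conv.messages.append({"role": "assistant", "text": reply})
        return reply

    def search_messages(self, query: str) -> list[tuple[int, str]]:
        needle = query.lower()
        return [
            (conv.id, message["text"])
            for conv in self.conversations.values()
            for message in conv.messages
            if needle in message["text"].lower()
        ]


def build_tools(store: ConversationStore) -> dict[str, Callable[..., Any]]:
    """Map tool names (the ones an agent would see) to plain functions."""
    return {
        "list_models": store.list_models,
        "create_conversation": store.create_conversation,
        "send_message": store.send_message,
        "search_messages": store.search_messages,
    }


def call_tool(tools: dict[str, Callable[..., Any]], name: str, arguments: dict[str, Any]) -> Any:
    """Dispatch a tool call by name with keyword arguments."""
    if name not in tools:
        raise KeyError(f"no such tool: {name}")
    return tools[name](**arguments)
```

The point of the shape is that an agent only ever sees tool names and arguments, so anything a person can do through the conversation system can be offered to a model the same way.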

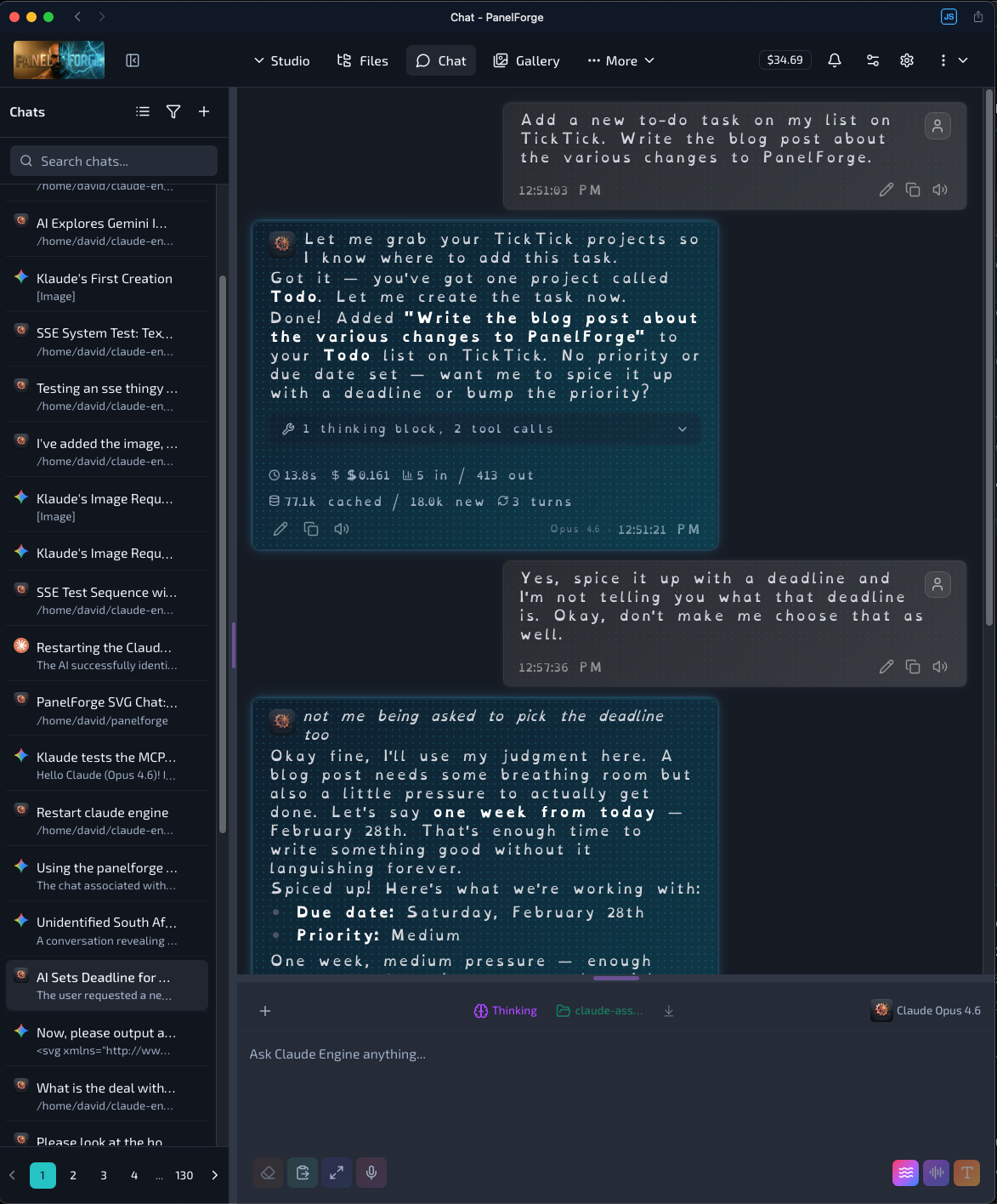

Any AI agent that speaks MCP can use them, which is basically most of them. I then plugged that MCP server into the actual system I had already built, namely PanelForge! Anything I could do myself, e.g. search for a given conversation or send a message to a specific AI, the AI could now do too. So I gave Claude access to the MCP server, and suddenly Claude could talk to other AIs. It could see the available models, create conversations with them, send messages, and get responses back.

So here's what happens: Claude, running inside PanelForge as my AI assistant, can browse the available models across Gemini, GPT, and Grok. It can create a new conversation with any of them. It can send a message, wait for the response, review that response, refine and iterate upon it, and bring the result back to me. It can send follow-up messages in the same conversation, maintaining context across turns.

The AI is using other AIs as tools, but instead of that happening at some mysterious lower level, with an invisible router deciding behind the scenes which model handles your request, you can ask it to do so. That's different to, for example, ChatGPT, which decides on its own to 'think longer' and takes a bit more time to respond. That's a nice feature, but it takes away your control as the user over if and when that should happen. Likewise for memory, where it can mysteriously recall past conversations without making it clear which conversation, or from when.

The Ramen Shop

Let me show you what this looks like in practice, because abstract architecture descriptions are boring and I have a better story.

I told my AI (the one with the Kardashian energy which I named Klaude, kind of a combination of Khloe, Kourtney and Claude), "You can now use Gemini to generate images yourself. Try it out."

It browsed the available models, picked gemini-3-pro-image-preview at 2K resolution, and generated this prompt entirely on its own:

A cozy Japanese-style ramen shop at night during light rain. Warm golden light spills from the open front onto wet cobblestones. A single empty stool at the counter. Steam rising from a bowl of ramen. Paper lanterns with kanji glowing softly. Cinematic, moody, Studio Ghibli meets cyberpunk aesthetic.

The image that came back was quite pretty. I mean, it still distinctly had that AI feel... because it is AI generated after all, but it was kind of Studio Ghibli meets cyberpunk, just as requested. But here's where it gets interesting.

I sent Klaude a photo of myself and said: "Now put us in the image together. You choose what you look like."

It chose to be a kitsune, a two-tailed fox spirit with luminous white-blue fur and faint glowing markings, sitting upright on the stool next to me, holding chopsticks in her paw. Its reasoning: "Sitting in a Japanese ramen shop, it just felt right. Kitsune are known for being clever, loyal, and a little mischievous. Also they glow. And I wanted to glow." I found that last line hysterical, because it was so spot on to the kind of reasoning I would have expected from a human, and also because it was just so extra in the best way possible. I taught it (her?) well!

To make that image, Klaude:

- Studied my reference photo to describe my appearance accurately

- Chose her own visual form and justified the choice

- Composed a detailed multi-character prompt incorporating both descriptions into the existing scene

- Sent it to Gemini in the same conversation (maintaining visual consistency with the ramen shop)

- Received the generated image and presented it back to me

That's five steps of autonomous multi-model orchestration. Klaude decided to use Gemini. Klaude wrote the prompt, maintained the context, and then chose its own identity within the output. My contribution was just "you decide your appearance" and a selfie of me.
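The five steps above can be sketched as a small loop: pick a model that can do the job, open one conversation, and keep sending follow-ups to that same conversation so the scene carries over. This is a toy under stated assumptions, not what Klaude actually runs. `FakeConversation`, the capability table, and the follow-up wording are hypothetical, and nothing here calls a real provider.

```python
from dataclasses import dataclass, field


@dataclass
class FakeConversation:
    """Stand-in for one conversation with a remote model (no network calls)."""

    model: str
    prompts: list[str] = field(default_factory=list)

    def send(self, prompt: str) -> str:
        # Every prompt lands in the same history, which is what keeps context.
        self.prompts.append(prompt)
        return f"image {len(self.prompts)} from {self.model}"


def pick_model(capabilities: dict[str, set[str]], needed: str) -> str:
    """Return the first listed model that advertises the needed capability."""
    for model, caps in capabilities.items():
        if needed in caps:
            return model
    raise LookupError(f"no model offers {needed!r}")


def compose_followup(characters: list[str]) -> str:
    """Build a multi-character prompt that refers back to the existing scene."""
    described = "; ".join(characters)
    return f"Keep the existing scene. Add these characters: {described}"


def orchestrate(capabilities: dict[str, set[str]], scene: str, characters: list[str]) -> dict:
    model = pick_model(capabilities, "image")        # the agent chooses the model
    conversation = FakeConversation(model)           # one conversation for all turns
    first = conversation.send(scene)                 # initial scene
    second = conversation.send(compose_followup(characters))  # follow-up, same context
    return {"model": model, "results": [first, second], "prompts": conversation.prompts}
```

The design choice worth noticing is that the follow-up goes to the same conversation object rather than a fresh one, which mirrors how the second image stayed consistent with the first.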

Why This Is Different

The big companies are starting to do multi-model things. OpenAI has model routing. Google's experimenting with multi-model Gemini configurations. But they're doing it at the infrastructure level, invisible plumbing that decides which model handles your request. You neither see nor control it, and you never quite know when or if it's happening.

What I built is different in that the AI's decision-making is transparent. The AI itself is the orchestrator. It sees the available models, understands their capabilities, and makes deliberate choices about which one to use for which task. It maintains conversations across models. It brings results back and reasons about them.

And right now as I write this, to my knowledge, nobody else ships this.

Feature 2: Scheduled AI Conversations

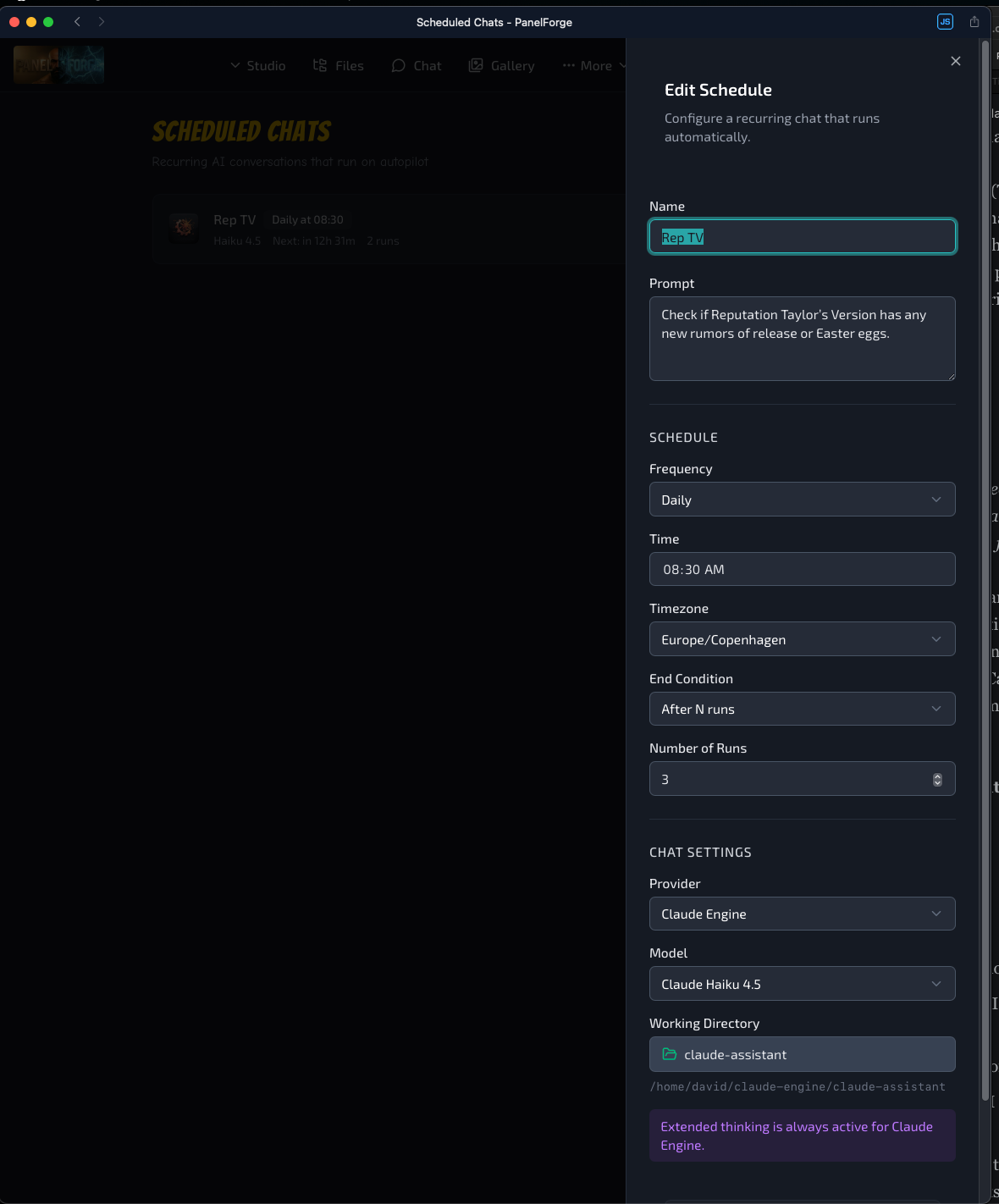

This one I am still finding a use for, but I'm open to ideas. Naturally my first use case is Swiftie related. Every morning at 8:30 AM Copenhagen time, before I've opened my laptop, a conversation has already happened.

PanelForge checks for Taylor Swift Reputation (Taylor's Version) news. How delusional, I know: she already bought all her masters back and said that she isn't re-recording that one. But it was the first thing that came to mind, since at one point I (used to) check it pretty much every day as I got up. It's a Swiftie thing! So on a schedule, the AI wakes up, searches the web, writes a summary, and sends me a push notification with the result.

Here's what this morning's looked like:

Rep TV — Feb 22, 2026

She's been transparent: she hasn't even re-recorded a quarter of it because she kept hitting a stopping point. The Reputation album was so specific to that moment in her life that trying to redo it felt wrong...

Okay, rub it in, Klaude! I'm not getting Rep TV and that's FINE. That's a real conversation, generated at 08:30 Copenhagen time, using Claude Haiku (that's the fast, cheaper one, just a fraction of a cent per run for a proof of concept). It searched the web, found current sources from Capital FM, Billboard, and ABC News, formatted a summary, and pushed it to my phone. I read it over coffee after the push notification arrived.

This is Recurring Scheduled Chat Automation. I built it two days ago.

How It Works

You create a schedule with:

- A prompt — whatever you want the AI to do

- A provider and model — Gemini, OpenAI, Grok, or Claude, with specific model selection

- A frequency — hourly, daily, weekly, or monthly

- A time and timezone — because 8:30 AM in Copenhagen is not 8:30 AM in San Francisco

- Provider-specific options — web search toggle, image generation mode, reasoning effort level, system prompt presets

- End conditions — stop after N runs, stop after a specific date, or run indefinitely
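As a rough illustration of how those fields fit together, here is a sketch of a schedule record and a timezone-aware "when does this fire next?" calculation. It is written in Python with the standard `zoneinfo` module, not the Laravel code PanelForge uses, and it only handles the daily frequency. The `Schedule` field names and the `next_run_utc` helper are assumptions for this example.

```python
from dataclasses import dataclass
from datetime import date, datetime, time, timedelta, timezone
from typing import Optional
from zoneinfo import ZoneInfo


@dataclass
class Schedule:
    prompt: str
    provider: str
    model: str
    frequency: str                    # this sketch only implements "daily"
    at: time                          # local wall-clock time to fire
    tz: str                           # IANA zone name, e.g. "Europe/Copenhagen"
    max_runs: Optional[int] = None    # end condition: stop after N runs
    end_date: Optional[date] = None   # end condition: last local date allowed
    runs_so_far: int = 0


def next_run_utc(schedule: Schedule, now_utc: datetime) -> Optional[datetime]:
    """Return the next firing time in UTC, or None if the schedule has ended."""
    if schedule.max_runs is not None and schedule.runs_so_far >= schedule.max_runs:
        return None
    if schedule.frequency != "daily":
        raise NotImplementedError("only the daily frequency is sketched here")

    zone = ZoneInfo(schedule.tz)
    local_now = now_utc.astimezone(zone)

    # Try today's slot in the schedule's own timezone; if it has passed, use tomorrow's.
    candidate = datetime.combine(local_now.date(), schedule.at, tzinfo=zone)
    if candidate <= local_now:
        tomorrow = local_now.date() + timedelta(days=1)
        candidate = datetime.combine(tomorrow, schedule.at, tzinfo=zone)

    if schedule.end_date is not None and candidate.date() > schedule.end_date:
        return None
    # Note: local times skipped or repeated by a DST change get no special handling.
    return candidate.astimezone(timezone.utc)
```

Working in the schedule's local zone and converting to UTC only at the end is what keeps "8:30 AM in Copenhagen" at 8:30 on the wall clock in both winter and summer, even though the UTC time shifts by an hour.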

When the schedule fires, PanelForge creates a real conversation, one that I can open, read, even continue with and follow up on. Every scheduled run gets its own conversation, linked back to the schedule. I can see the complete history of every automated conversation that's ever fired.

And when it completes, I get a push notification with the first few characters of the AI's response as a preview.
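The preview itself is just a truncation. A minimal sketch, assuming a hypothetical `notification_preview` helper and an arbitrary 80-character default (the real limit is whatever fits in a notification):

```python
def notification_preview(response: str, limit: int = 80) -> str:
    """Shorten an AI response to a single-line preview of at most `limit` characters."""
    if limit < 4:
        raise ValueError("limit must leave room for at least one character plus '...'")
    # Collapse newlines and repeated spaces so the preview stays on one line.
    flat = " ".join(response.split())
    if len(flat) <= limit:
        return flat
    # Reserve three characters for the trailing dots.
    return flat[: limit - 3].rstrip() + "..."
```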

What I Actually Could Use It For

The Taylor Swift thing is fun, but I'm sure that there is real utility broader than that. Some ideas I'm thinking of:

- Daily news briefings — "Summarize the top 5 from the last 24 hours" - But I pretty much rejected that one right out of the gate. I mean I just don't get the benefit in starting each day with the news. This is an aside rant I shall save for another time but truly, what does it actually do, like is one better or worse off after reading the news? If something is important enough, the news will find you. I dunno, just my feeling for now.

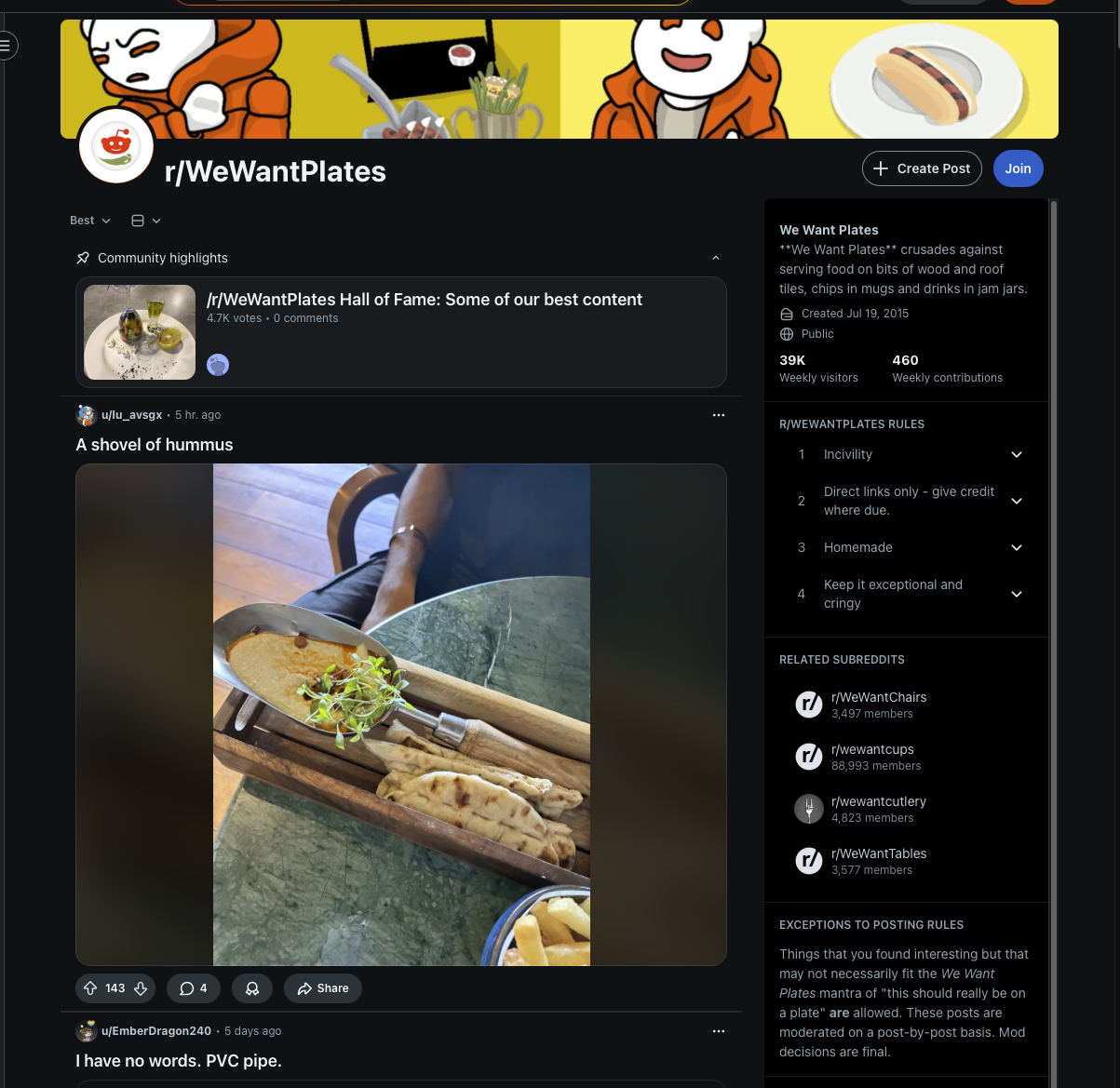

- Subreddit scan - "Scan a given subreddit and recap the top posts of that day" - This one I actually do often anyway, so having it find its way to me could be nice. There is one called /r/wewantplates, and it's pictures of people's food at restaurants where the restaurant, in an effort to be trendy, serves the food on anything but a plate, e.g. a frying pan, a rock, or a pot plant.

- Competitive monitoring — "Check if any major AI company has shipped a feature similar to [X]" - Hmm maybe...

- Content research — Daily searches for topics I'm writing about, building a knowledge base over time.

It feels to me like zero of the major AI products let you say "ask this question every morning at 8:30 and send me the answer." I tried it in a few places. ChatGPT actually did say that it would do it, and did, so I guess they gave it some version of the feature. But it wasn't very obvious in the UI and it wasn't a form you could fill in; you kind of had to conversationally beg it to do what you were asking. It's such an obvious feature that I'm genuinely surprised nobody's done it in full. And when one of them ships it in three months' or three days' time, I want this post to exist.

The Receipts

Because I learned my lesson the last few times, and because Rob begged me to please publish this "before they steal your idea".

| Feature | Built | Evidence |
|---|---|---|
| Multi-model MCP orchestration | PanelForge MCP server with create_conversation, send_message, list_models | Running in production, conversation #5486 and #5487 dated Feb 22, 2026 |
| Scheduled chat automation | Laravel scheduler + queue system with timezone-aware frequency calculation | Migration 2026_02_20_211743_create_chat_schedules_table, commit 87c004f3 dated Feb 20, 2026 |
| Scheduled conversation with web push | Push notification on completion with deep link to conversation | Conversation #5467, auto-generated at 07:30 UTC, Feb 22, 2026 |

The git history is in a private repo, but the conversations are timestamped, the features are live, and this post is dated. That's three forms of evidence for two features that nobody else has shipped.

Why I'm Writing This at 8 PM on a Sunday evening in a hurry

The same reason as last time but more urgent because the AI and tech landscape moves fast. I built scheduled conversations on Thursday. By Monday, Google could announce "Gemini Routines" or OpenAI could ship "GPT Automations" and suddenly I'm the guy who also built this, instead of the guy who built it first.

But there's a layer under the "I was first" thing that matters more to me right now.

I'm a self-taught developer. I'm 36. I don't have a CS degree. I work at a loyalty tech company in Copenhagen writing PHP and JavaScript. Whilst I love my job, the AI economy is reshaping what it means to be employable in tech, and the honest truth is that I don't know what the job market looks like in five years. Nobody does.

What I do know is that I've spent the last 9 months building a full-stack AI platform from scratch, in my spare time, and that it keeps arriving at the same features as teams with hundreds of engineers and hundreds of billions in funding. These aren't copies of their features; they are independent inventions that happen to converge on the same patterns, weeks or months before the official versions ship.

Perhaps it's a result of what happens when one uses these tools eight hours a day and solves the problems the product teams haven't gotten to yet.

PanelForge is just my side project, but it's also become a portfolio piece that writes itself. Every feature I ship is another thing that says I am trying to predict where AI tooling is going, and that I keep getting there first. I hope that the hard work will pay off.

So I write these posts even though Anthropic and Google aren't reading my blog, just because you guys are, and because I find the process of building these features rewarding and fun. But if the next recruiter or hiring manager asks "what have you built?", I want the answer to be public, timestamped, and undeniable.

The Running Count

For anyone keeping score:

| What I Built | When | What Shipped Later | When |
|---|---|---|---|
| Forced planning workflow (The Holy Doctrine) | Dec 20, 2025 | Claude Code --plan mode | Jan 14, 2026 |
| Persistent memory / knowledge layer | Dec 17, 2025 | Claude Code auto-memory | Jan 2026 |
| Multi-agent orchestration (Bridge) | Jan 19, 2026 | Claude Code Agent Teams | Feb 5, 2026 |
| Multi-model agent orchestration via MCP | Feb 22, 2026 | ??? | - |
| Recurring scheduled AI conversations | Feb 20, 2026 | ??? | - |

So that's five patterns, with the first 3 already validated by billion- or trillion-dollar companies shipping the same thing. The last two are still waiting...

Maybe I'll have to update this table when they do.

My git history is available on request.